Subproject 3

Research on Ultra-High-Dimensional Multimodal Foundation Models

Opening a new frontier in high-dimensional generative AI that precisely reflects the real world

As generative artificial intelligence advances, there is a growing need for models that understand and reflect the physical laws of the real world, diverse sensor information, and personal privacy requirements, and that can handle high-dimensional time-series, 3D, and 4D data. In response, Subproject 3 aims to develop a high-dimensional multimodal foundation model capable of interacting precisely with the real world.

This research seeks to establish core technologies for ultra-high-dimensional generative AI that span a wide range of domains, including imaging, healthcare, molecular structures, time-series data, and privacy protection. In particular, it emphasizes an integrated approach that incorporates various types of real-world sensor data, physical environments, human preferences, and ethical considerations.

KAIST is developing text-to-image generation models and 4D generation models based on Diffusion Transformers (DiT), trained on multimodal image-text datasets. Prompt recaptioning is applied to improve the quality of text inputs, and the team is also studying the generation of text, video, and medical data in conjunction with vision-language models. In parallel, it is developing realistic 3D motion prediction technology and medical image generation models that use high-field MRI data.

POSTECH is developing a 3D molecular structure generation model that reflects the physical laws of the microscopic world. To generate physically stable molecular structures, an interactive learning framework that communicates with an external simulator has been introduced, and reinforcement learning–based curriculum learning techniques are applied to further improve the model’s accuracy and safety.

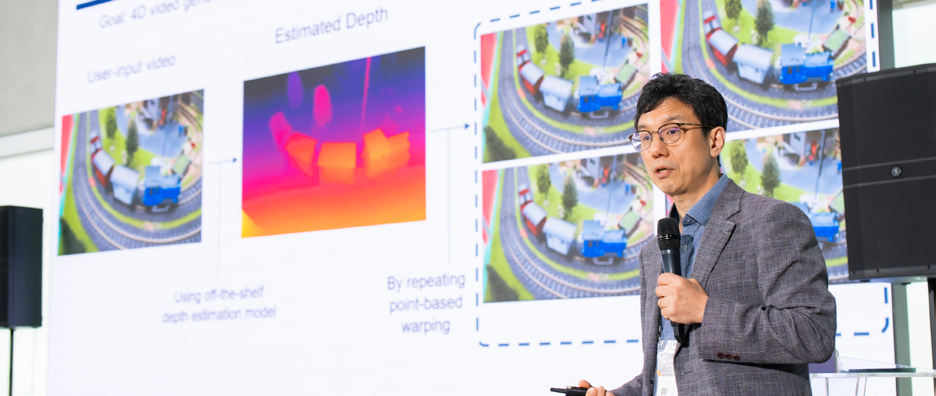

Yonsei University is developing practical generative AI models using preference-based optimization methods such as reinforcement learning from human feedback (RLHF) and direct preference optimization (DPO), which incorporate user preferences and real-world requirements. The team also integrates image information from multiple viewpoints to perform precise 3D and 4D reconstruction even from unrefined video inputs, and is designing architectures capable of preference-based reasoning in multi-object scenarios.
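For readers unfamiliar with DPO, the following is a minimal sketch of the standard DPO loss for a single preference pair. It is illustrative only and is not the Yonsei team's implementation; the function names, the example log-probabilities, and the value of `beta` are assumptions chosen for the example.

```python
import math


def log_sigmoid(x: float) -> float:
    """Numerically stable log(sigmoid(x))."""
    if x >= 0:
        return -math.log1p(math.exp(-x))
    return x - math.log1p(math.exp(x))


def dpo_loss(policy_chosen: float, policy_rejected: float,
             ref_chosen: float, ref_rejected: float,
             beta: float = 0.1) -> float:
    """DPO loss for one (chosen, rejected) pair.

    Each argument is the summed log-probability of a full response under
    either the trainable policy or the frozen reference model.
    beta (assumed value) controls how strongly the policy is kept close
    to the reference.
    """
    # Implicit rewards: how much more likely each response became
    # under the policy relative to the reference model.
    chosen_reward = beta * (policy_chosen - ref_chosen)
    rejected_reward = beta * (policy_rejected - ref_rejected)
    # The loss shrinks as the chosen response is favored over the rejected one.
    return -log_sigmoid(chosen_reward - rejected_reward)


# Hypothetical log-probabilities for illustration.
neutral = dpo_loss(-5.0, -5.0, -5.0, -5.0)   # policy identical to reference
aligned = dpo_loss(-4.0, -6.0, -5.0, -5.0)   # policy prefers the chosen response
```

When the policy matches the reference, the loss equals log 2; it falls below that value once the policy assigns relatively more probability to the preferred response.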

Korea University is developing an LLM-based architecture specialized for time-series generation, applying domain-specific principles to tokenization that captures temporal dynamics, to time-series forecasting, and to multivariate generation. In parallel, the team is implementing models capable of producing high-precision scientific data through SE(3)-equivariant neural networks, which respect rotational and translational symmetry, as well as Hamiltonian-based physical property prediction.
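The symmetry property behind SE(3)-equivariant networks can be checked numerically. The sketch below is not a neural network and is not the team's model; it only illustrates, on hypothetical 3D coordinates, that scalar quantities such as interatomic distances stay unchanged under a rotation plus translation (invariance), while vector quantities such as the centroid move along with the input (equivariance). For brevity the rotation is restricted to the z-axis, which is one element of SE(3).

```python
import math


def transform(points, theta, shift):
    """Rotate points by theta (radians) about the z-axis, then translate by shift."""
    c, s = math.cos(theta), math.sin(theta)
    out = []
    for x, y, z in points:
        out.append((c * x - s * y + shift[0],
                    s * x + c * y + shift[1],
                    z + shift[2]))
    return out


def pairwise_distances(points):
    """All pairwise Euclidean distances: an invariant feature."""
    return [math.dist(a, b)
            for i, a in enumerate(points)
            for b in points[i + 1:]]


def centroid(points):
    """Mean position: an equivariant feature."""
    n = len(points)
    return tuple(sum(p[k] for p in points) / n for k in range(3))


# Hypothetical coordinates for illustration.
atoms = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.5, 0.5)]
theta, shift = 0.7, (2.0, -1.0, 3.0)
moved = transform(atoms, theta, shift)

# Invariance: distances are the same before and after the transformation.
distances_match = all(
    math.isclose(d0, d1, abs_tol=1e-9)
    for d0, d1 in zip(pairwise_distances(atoms), pairwise_distances(moved))
)

# Equivariance: transforming then averaging equals averaging then transforming.
expected_centroid = transform([centroid(atoms)], theta, shift)[0]
centroid_matches = all(
    math.isclose(a, b, abs_tol=1e-9)
    for a, b in zip(centroid(moved), expected_centroid)
)
```

An equivariant architecture is built so that every layer satisfies the second kind of relation by construction, rather than having to learn it from data.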

Meanwhile, privacy-preserving technologies applicable to real-world environments constitute another core area of this research. The team plans to build privacy-friendly generative AI through methods such as real-time concept removal based on Low-Rank Adaptation (LoRA), meta-learning–based one-shot privacy representation removal, and user-requested representation deletion.
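As background, the sketch below shows only the generic low-rank update that LoRA is built on, in which a frozen weight matrix W is adjusted by a scaled product of two small matrices B and A. It does not implement the concept-removal methods described above; the matrix values, `alpha`, and the helper names are assumptions for illustration.

```python
def matmul(a, b):
    """Plain-Python matrix product of two nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def lora_weight(w, b, a, alpha, rank):
    """Return W + (alpha / rank) * (B @ A); W itself stays frozen."""
    scale = alpha / rank
    delta = matmul(b, a)
    return [[w[i][j] + scale * delta[i][j] for j in range(len(w[0]))]
            for i in range(len(w))]


def lora_param_count(d_out, d_in, rank):
    """Trainable parameters in B (d_out x rank) plus A (rank x d_in)."""
    return rank * (d_out + d_in)


# Hypothetical 2x3 weight with a rank-1 adapter.
w = [[1.0, 0.0, 0.0],
     [0.0, 1.0, 0.0]]
b = [[1.0], [2.0]]        # d_out x rank
a = [[0.5, 0.0, -0.5]]    # rank x d_in
adapted = lora_weight(w, b, a, alpha=2.0, rank=1)

# With B set to zero (the usual initialization), the adapter has no effect.
untouched = lora_weight(w, [[0.0], [0.0]], a, alpha=2.0, rank=1)

# Adapter size versus full fine-tuning for a hypothetical 4096 x 4096 layer.
adapter_params = lora_param_count(4096, 4096, rank=8)
full_params = 4096 * 4096
```

Because the adapter is small and can be added or removed without touching the base weights, it is a natural building block for edits that need to be applied quickly, which is presumably why it suits real-time use.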

In addition, the team is developing a 4D generative foundation model that integrates both spatial and temporal dimensions. This model extends beyond conventional 2D/3D generation by accounting for temporal variation and camera viewpoint changes, which allows it to generate data that varies across both space and time. The research is also expanding toward designing predictable representations that capture not only geometric structures but also semantic information.

This research is expected to be applicable across a wide range of fields, including precision medicine, drug discovery, robotics, smart manufacturing, and privacy-enhancing AI services. The development of high-performance foundation models capable of reflecting the complex structure of the real world as well as ethical constraints will form a crucial foundation for dramatically improving the reliability and applicability of generative AI.

Professor Ye Jong Chul of KAIST, the principal investigator of Subproject 3, expressed the following vision:

“Our research team is developing high-dimensional generation and inference technologies grounded in physical principles, focusing on the domains of imaging, bio, and urban systems. We are tackling various real-world problems through methods such as relative depth estimation using 2D tracks from monocular video, multi-property molecular generation based on BFN+DPO, pedestrian behavior generation using 3D semantic maps, and the Chain-of-Zoom technique, which enables up to 256× super-resolution. These approaches demonstrate broad potential applications in digital twins, drug discovery, urban planning, and high-resolution content generation.”